Living Archive

“Born-digital is a term derived from the field of digital preservation and digital heritage practices, describing digital materials that are not intended to have an analogue equivalent, either as the originating source or as a result of conversion to analogue form”

Breakell, Sue, ‘Perspectives: Negotiating the Archive’, in TATE Papers, Issue 9.

Archaeology of the term ‘born-digital’ on Virtueel Platform

Digital Preservation Coalition definitions.

Contributions to an Artistic Anthropology. Lev Manovich. NJP Reader #1, Excerpts

Lev Manovich is a Professor in the Visual Arts Department, University of California – San Diego, a Director of the Software Studies Initiative at California Institute for Telecommunications and Information Technology (Calit2), and a Visiting Research Professor at Goldsmiths College (University of London), De Montfort University (UK) and College of Fine Arts, University of New South Wales (Sydney).

Academics, new media artists, and journalists have been writing extensively about “new media” since the early 1990s. In many of these discussions, a single term came to stand for the whole set of new technologies, new expressive and communicative possibilities, and new forms of community and sociality which were developing around computers and the Internet…

I don’t need to convince anybody today about the transformative effects the Internet and the web have already had on human culture and society. What I do want to point out is the centrality of another element of the computer revolution which so far has received less theoretical attention.

This element is software.

I want to suggest that none of the new media authoring and editing techniques we associate with computers is simply a result of media “being digital.”

The new ways of media access, distribution, analysis, generation and manipulation are all due to software.

Which means that they are the result of particular choices made by individuals, companies, and consortiums who develop software.

Some of these choices concern basic principles and protocols which govern the modern computing environment.

Examples include the “cut and paste” commands built into all software running under the Graphical User Interface and its newer versions (such as iPhone OS), or the one-way hyperlinks as implemented in World Wide Web technology.

Other choices are specific to particular types of software (e.g. illustration programs) or individual software packages.

If particular software techniques or interface metaphors which appear in one particular application become popular with its users, we may often see them appearing in other applications.

For example, after Flickr added “tag clouds” to its interface, they soon became a standard feature of numerous web sites.

The appearance of particular techniques in applications can also be traced to the economics of the software industry – for instance, when one software company buys another company, it may merge its existing package with the software from the company it bought.

All these software mutations and “new species” of software techniques are social in the sense that they don’t simply come from individual minds or from some “essential” properties of a digital computer or a computer network. They come from software developed by groups of people and marketed to large numbers of users.

In short, the techniques and the conventions of the computer meta-medium and all the tools available in software applications are not the result of a technological change from “analog” to “digital” media. They are the result of software which is constantly evolving and which is subject to market forces and constraints.

This means that the terms “digital media” and “new media” do not capture very well the uniqueness of the “digital revolution.” Why? Because the new qualities of “digital media” are not situated “inside” the media objects.

Rather, they exist “outside” – as commands and techniques of media viewers, authoring software, animation, compositing and editing software, game engine software, wiki software, and all other software species.

Thus, while digital representation enables computers to work with images, text, forms, sounds and other media types in principle, it is the software which determines what we can do with them. So while we are indeed “being digital,” the actual forms of this “being” come from software.

Accepting the centrality of software puts into question a fundamental concept of modern aesthetic and media theory – that of “properties of a medium.” What does it mean to refer to a “digital medium” as having “properties”?

For example, is it meaningful to talk about unique properties of digital photographs, or electronic texts, or web sites?

Strictly speaking, it is not accurate.

Different types of digital content – images, text files, 3D models, etc. – do not have any properties by themselves.

What as users we experience as properties of media content comes from software used to create, edit, present and access this content.

It is important to make clear that I am not saying that today all the differences between different media types – continuous tone images, vector images, ASCII text, formatted text, 3D models, animations, video, maps, sound, etc. – are completely determined by application software.

Obviously, these media types have different representational and expressive capabilities;

they can produce different emotional effects; they are processed by different groups and networks of neurons;

and they also likely correspond to different types of mental processes and mental representations.

These differences have been discussed for thousands of years – from ancient philosophy to classical aesthetic theory to modern art to contemporary neuroscience.

What I am arguing is something else. On the one hand, interactive software adds a new set of capabilities shared by all these media types: editing by selecting discrete parts, separation between data structure and its display, hyperlinking, visualization, searchability, findability, etc.

On the other hand, when we are dealing with a particular digital cultural object, its “properties” can vary dramatically depending on the software application which we use to interact with this object…
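As a minimal sketch of this point (an illustration added here, not part of Manovich's text), the Python snippet below treats one and the same byte sequence as text, as a hex dump, and as a row of grayscale brightness values. The function names and the sample data are assumptions made for illustration; the only claim is that what a user perceives as the "properties" of the data depends on which viewer is applied to it.

```python
# Illustrative sketch: one byte sequence, three hypothetical "viewers".
# The data never changes; only the software interpreting it does.

DATA = bytes([72, 101, 108, 108, 111])  # sample data, chosen for illustration


def view_as_text(data: bytes) -> str:
    """A 'text editor' view: decode the bytes as ASCII characters."""
    return data.decode("ascii")


def view_as_hex(data: bytes) -> str:
    """A 'hex editor' view: show each byte as two hexadecimal digits."""
    return data.hex(" ")


def view_as_pixels(data: bytes) -> list[float]:
    """An 'image viewer' view: map each byte to a brightness in [0, 1]."""
    return [round(b / 255, 2) for b in data]


if __name__ == "__main__":
    print(view_as_text(DATA))
    print(view_as_hex(DATA))
    print(view_as_pixels(DATA))
```

Each function stands in for a different application: the text view offers characters to select and edit, the hex view offers byte offsets, and the pixel view offers brightness levels, although the underlying object is identical in all three cases.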

To summarize this discussion: there is no such thing as “digital media”. There is only software – as applied to media (or “content”).

To put this differently: for users who can only interact with media content through application software, “digital media” does not have any unique property by itself.

What used to be “properties of a medium” are now operations and affordances defined by software.

Introduction to sustainable archiving of born-digital cultural content. Annet Dekker, Virtueel Platform, Excerpts

Digitisation of archives has opened up possibilities for access to huge quantities of material, be it text, image or audiovisual. The heritage sector is increasingly aware of the value its archives have for a professional audience and the broader public.

They see the digitisation of their collections and the use of new techniques as improving access to their collections. In addition, cultural organizations increasingly recognize the value of recording, streaming online and archiving their conferences, performances and other live events, and of implementing content management systems that make this content accessible.

Archives are collective memory banks instead of state instruments.

Artists and cultural organizations alike face the challenge of developing sustainable, long-term systems to document and access their knowledge.

There is also a growing interest and awareness on the part of the general public about the perils of born-digital content.

Newspapers report on ‘online history facing extinction’, ‘seeking clarity on archiving e-mails’ and ‘forget storage if you want your files to last’.

All the above point to the need to understand the nature of this new type of material, or to put it simply: what does archiving mean in the Internet era?

- Archives have the important task of saving cultural heritage from being lost forever

- What, indeed, is the nature of born-digital material and how can we analyze it?

- Should we prioritize the preservation of the computer programs designed especially to make these works accessible and legible over and above the evolution of software and hardware?

- Or do we need to find other methods such as recording, emulation and migration?

- And how can the contexts these works dealt with be preserved?

- Knowledge transfer is important, but what does it actually involve, and what is its significance?

Report: Archive 2020 Expert meeting. Annet Dekker, Virtueel Platform

In May 2009 Virtueel Platform organized Archive 2020, an expert meeting that focused on the longevity and sustainability of born-digital content produced by cultural organizations or practitioners.

Group discussion:

Christiane Paul – Whitney Artport: Internet art in a museum context: preservation strategies and initiatives.

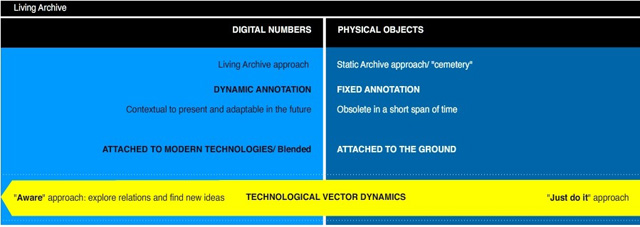

Eric Kluitenberg – The Living Archive: The Living Archive aims to create a model in which the documentation of ongoing cultural processes, archived materials, ephemera and discursive practices is interwoven as seamlessly as possible. Approaching the ‘archive’ as a discursive principle.

Olga Goriunova – Runme.org: Looking at the archive as a process: ethical considerations when dealing with aesthetic and historical change.

Monika Fleischmann & Wolfgang Strauss – Netzspannung.org: The archive as a constantly living and growing entity, and the possibilities of using semantic mapping as a tool that organizes the content in new ways each time it is visited.

Esther Weltevrede: The appearance of a web archive when capturing hyperlinks, search engine results, and other digital objects.

Saving relevant aspects besides the digital document, and how to repurpose born-digital devices (search engines, platforms and recommendation systems) for web archiving.

Aymeric Mansoux – art.deb: Using live distribution systems, repositories, virtual machines and servers as more stable and lasting infrastructures for software art.

Alessandro Ludovico – Neural: What individual and small archives can learn from shared collaborative platforms.

Biographies of the presenters

The term ‘born-digital’ refers to ‘digital materials that are not intended to have an analogue equivalent, either as the originating source or as a result of conversion to analogue form’.

The archiving of such content has received very little attention in the Netherlands, to the extent that, unless immediate steps are taken, we could soon talk of a ‘digital Dark Age’ in which valuable content is lost to future generations.

The aim of the expert meeting was therefore to examine existing examples of these types of archives and determine which issues need to be addressed if we are to champion their growth in the short and long term.

Some of the questions that Virtueel Platform raised included:

• Which born-digital cultural archives already exist and what lessons can we learn from them?

• Can a community establish its own archive without an institutional structure?

• Could a community-driven approach with social software help develop innovative strategies for group archiving?

• How can new and traditional tools best be merged to improve access and usability?

The first question concerned the nature of a born-digital archive:

• Does archiving born-digital works raise problems that require new solutions?

• What does the act of archiving mean in terms of activity, software support, etc.?

• Could we regard archiving as a process?

• Should digital archives set up a retention policy or should they keep all the content and metadata and invest in search engine technology?

• How can we safeguard and archive contextual information (the context in which the work came into being, was commented on, and contributed to)?

Not surprisingly, these questions gave rise to issues about the notions of visibility and accessibility of archives:

• How can the quality of content in new archives be ensured within the larger and mostly institutional discourse?

• Should special organizations be established to research and systematically document media art, i.e., organizations that bundle relevant information, or should this task be transferred to traditional institutes such as museums (which, in some countries, are not very open to born-digital artworks)?

• Can an archive survive outside the museum structure?

• How can we make archives more visible and increase access?

Related to this notion of accessibility was, of course, a concern about the dissemination of the content, ways of possible reuse, and the role communities could play in this process:

• How can the knowledge about archiving born-digital content and digital archiving be disseminated and be made available to professionals and laymen; in other words, how open can an archive be?

• Should we design archives that facilitate the re-use or remixing of material, or the creation of mash-ups? What examples already exist?

• What role can communities play in strengthening connections between archives?

• Will we ever find a way to build a global archive – and do we want to?

And, obviously, the most pressing concern is who will pay for this. How will these archives deal with their funding?

• How can financial stability be guaranteed for non-institutional and/or informal online archives and platforms?

• What can be learned from new funding models that differ from ‘traditional’ institutional or project-based funding?

‘There is an increasing overlap between the problems relating to the different types of archives (small, large, government – private, art, documentary, and audiovisual archives) with regard to storage, opening up and accessibility to the digital domain, which are, to a degree, becoming increasingly similar.

Issues such as authenticity and integrity, selection and documentation, reproducibility, recording interactivity, etc., impact on all areas and are bound up with the type of collection that is being managed, to a greater degree than in the analogue era.’

From Darwinistic Archiving to standardisation and DIY

A new strategy for the future re-creation of software art was suggested, aptly referred to as ‘Jack the Wrapper’, which would involve putting all the software in a box and describing and documenting the entire artwork so that it could be cloned in the future.

But, as was clearly demonstrated, not all born-digital material is easily documented and packaged. The term ‘Darwinistic Archiving’ was suggested, referring to the survival of the best-documented artworks.
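To make the idea of 'putting all the software in a box and describing and documenting' it more concrete, the sketch below records a short description and a checksum for every file in a folder. It is a minimal illustration under stated assumptions, not the method proposed at the meeting: the manifest layout, the file name `manifest.json` and the function names are all hypothetical, and a real package would also need to document dependencies, hardware and context.

```python
import hashlib
import json
from pathlib import Path

MANIFEST_NAME = "manifest.json"  # hypothetical file name for this sketch


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: Path, description: str) -> dict:
    """List every file under `root` with its size and checksum."""
    files = [
        {
            "path": p.relative_to(root).as_posix(),
            "bytes": p.stat().st_size,
            "sha256": sha256_of(p),
        }
        for p in sorted(root.rglob("*"))
        # Skip the manifest itself so rebuilding gives a stable result.
        if p.is_file() and p != root / MANIFEST_NAME
    ]
    return {"description": description, "files": files}


def write_manifest(root: Path, description: str) -> Path:
    """Write the manifest as JSON next to the packaged files."""
    target = root / MANIFEST_NAME
    manifest = build_manifest(root, description)
    target.write_text(json.dumps(manifest, indent=2), encoding="utf-8")
    return target
```

Calling `write_manifest(Path("artwork"), "free-text description of the work")` on a folder (the folder name is again an assumption) leaves a JSON file beside the work. A later caretaker can recompute the checksums to confirm that nothing in the box has changed or gone missing, which covers only the integrity part of the documentation problem raised above.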

Another strategy that some national archives use is ‘scanning on demand’, i.e., content is only digitized when someone asks for it. Issues of invisibility and choice will still remain, of course.

In order to highlight the problem, a general call went out to write books and publish articles and reviews in magazines or newspapers: ‘the online’ has to become physical. This includes organizing exhibitions that will emphasize the urgency of preservation.

*For example, the 2004 Guggenheim exhibition Seeing Double and the To clean or not to clean – schoonmaken van kunstwerken op zaal exhibition at the Kröller-Müller Museum, the Netherlands, in 2009.

There was also a call to change the term ‘digital preservation’ into ‘permanent access’, which might provide an impetus to the understanding and importance of the work. Everyone was in favour of devoting more attention to presentation and exposure.

- Sustainability

A certain level of centralisation was seen as important, if only to clarify and distribute responsibilities.

Another approach to explore would be to integrate archiving into existing institutions and have them apply for project funding.

While this strategy has many positive aspects it is important that everyone in the institute is aware of the activities involved in a project and that it is not in the hands of one (enthusiastic) individual.

Moreover, the procedures to follow if funding stops must be unambiguous. Another strategy was to distribute the work as much as possible: think of remixing strategies and an approach Kevin Kelly calls ‘movage’: the more it is out there, the more it is seen, and the better it is archived.

Although contested, standardisation should also be considered. The same indexing standards will improve access, but an international task force is needed to deal with this. Instead of traditional methods one could investigate strategies similar to the Wikipedia model.

- Responsibility

Who is responsible and what is the role of the artist, programmer, curator, museum and audience?

A suggestion was made to increase the responsibility of the artists and make them aware of the problem by introducing preservation strategies into funding applications, for example. However, referring to Darwinistic Archiving, this raises the question of what is considered more important: the quality of the work or the preservation strategy.

Institutes and museums, or even universities, which have more resources and expertise, should receive more attention.

These institutes could assume a coordinating role, so that smaller organizations or individuals can participate.

A ‘funding for research’ approach was suggested to make it beneficial for both sides. Because the web is made by individuals and not by organizations, the network community and users appealed for a strategy to mobilise these people and make them aware of their self-sustainability. In some cases, this would also better reflect the origin and process of the work.

- Urgent actions have to be taken

Central to the discussions at the meeting was the participants’ high level of commitment and their sense of urgency, and there was a general agreement that the primary focus should be on:

- Raising awareness:

About the websites of artists, curators, organizations, museums as well as of funding bodies;

- Funding:

Preservation strategies could be included in funding applications. ‘Artists should make digital wills’;

- Accessibility, open standards:

Because most institutions have specific demands, open source software can provide the flexibility that is needed in the field as well as provide a sound basis with universal standards;

- Knowledge sharing:

Also between different disciplines (music, broadcasting, gaming, science, oral history);

- Research and best practice:

Examine existing archives and how they function, as well as publish examples of best practices and unsuccessful strategies. This will create a shared knowledge base and foster the learning process;

- Presentation:

Create urgency by showcasing, presenting and publishing.

In the end it is vital that each work has more possibilities for presentation.

Make the field visible.

From Sustainable archiving of born-digital cultural content. Virtueel Platform